I’ve been previewing Citrix Performance Analytics – what are my first impressions of this service?

I’ve spoken and written many times about the absolute importance of gathering client-side metrics in any estate, and cloud makes it even more important. Cloud services introduce variables that we often can’t control, such as the move from corporate devices to full “access from anywhere”, or the traversal of network routes we don’t fully understand. With this in mind, visibility into the user experience, or “UX”, becomes ever more vital. I’ve said it many times, and I will say it again – performance monitoring of the user endpoint is at least as important as that of every other infrastructure component, and being able to respond proactively to performance degradation is crucial. If you don’t identify and react to bottlenecks that affect the overall UX, then your users will give your solution a bad name – and as every social media user in the world knows, once something has a poor reputation (whether deserved or not), it is very difficult to get rid of that stigma.

On every project I’ve ever worked on, I have been absolutely determined at inception to do it right. Doing it right includes putting in a slick, comprehensive, customized monitoring solution that accurately gathers data on every infrastructure component and presents it in an easy-to-use format benchmarked against industry standards.

Of course, the real world doesn’t make things that easy for us. Putting in monitoring solutions often involves a lot of time, effort and cost. We need to come up with business justification (and this is a very big hurdle – any solution needs to have business-wide operational value, not just help with the project in hand), design documents, implementation plans, vendor comparisons, etc. And even if we can get past the procurement and deployment prerequisites (which can be very heavy), we then have to handle implementation and all that it entails. Customizing the solution, making it resilient, assessing any impact, running tests – we all know there are myriad tasks involved that will take time to get done. And even if we can achieve all of that, we then have to make sure we are providing good, actionable data that is of real value to the people looking at it. On many projects I’ve worked on, time is of the essence, and the intransigence of business processes means we can’t afford the time it would take to procure, deploy, test, customize and validate this wonderful monitoring solution we would like to have in place.

So monitoring often just gets put on the back burner. And then we’re stuck down a rabbit hole, because now we can’t see where our problems are. And then our users start complaining. And… you know the drill. We’ve all been there.

On my current project, this is exactly what happened. Now you can argue until you’re blue in the face that this is a failure on a planning or a process level, but this sort of situation is commonplace. And it’s not like we just sent it live without anything to help us – obviously we have Director in play, we have a slew of monitoring agents that can provide data on specific areas of interest – but there’s nothing that consolidates and presents the data, that aggregates it together and gives it meaning. Director is useful, but mainly from an admin perspective of looking into particular sessions or workers, and it has always been (to me anyway) used mainly as a point-in-time tool. You can look at trends, but you need to work to produce the reports you want and extract pertinent data from them. As for other agents that we have present – they do jobs in their own ways. We can, for instance, view network utilization and performance data, but it isn’t presented in such a way that helps us out in seeing whether “the Citrix solution” is suffering from a network problem – often, we use it reactively, seeing a problem reported and then delving into this tool to see if the network is the issue.

So, with all this in mind (and I do apologise for the convoluted introduction, but I think it is important to set the scene), I was very excited when Performance Analytics became available to us. We’ve had access to Security Analytics for a while, but this was something new, and given that I was spending a lot of time firefighting against performance issues – and, very importantly, trying to gain some understanding that was based on something other than hearsay – I immediately wanted to get it activated.

Setting up

How difficult was it to get Performance Analytics set up? Well, it was remarkably easy – I submitted my orgID to Citrix, they activated the service, and I simply waited for it to start showing me data. Now I know a lot of you are thinking “well yeah genius, it’s a cloud service, it’s going to be easy to set up”, but in comparison to on-premises deployments, it was just so awesome to be able to flip a switch and see something start working. And even other monitoring systems I’ve deployed into cloud hosting have often involved having to install or provision something, so to be able to simply activate this with an email was refreshingly good.

Of course, it was all so easy for me because we don’t (yet!) have any on-premises Virtual Apps and Desktops deployments we want to add to it. It’s important to note that this is not just a cloud offering: you can use Performance Analytics whether you are a pure on-prem customer, a Cloud customer, or a hybrid customer with a mix of on-prem and Cloud Sites. To monitor on-prem Sites, you will need to set up a Citrix Cloud account to get access to the Analytics service, and then onboard those Sites through Director. As long as your Delivery Controllers and the Director console have outbound internet access, and are on version 1909 or later, the setup process should still be vastly less troublesome than what you are used to.
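If you have a number of on-prem components to check against that 1909 minimum, a quick script can flag the ones that need upgrading before you try to onboard. This is only a rough sketch: the server names and versions below are made up, the `meets_minimum` helper is my own, and it simply assumes that releases are written in the four-digit YYMM style (1909, 1912, 2003) and that anything else, such as an older 7.x build, falls short.

```python
# Minimum release named in the post, in YYMM form.
MIN_VERSION = 1909


def meets_minimum(version: str, minimum: int = MIN_VERSION) -> bool:
    """Return True if a YYMM-style release string is at or above the minimum.

    Assumption: anything that is not a four-digit YYMM string (for example
    an older "7.15" build) predates the scheme and does not qualify.
    """
    version = version.strip()
    if not (version.isdecimal() and len(version) == 4):
        return False
    return int(version) >= minimum


# Hypothetical inventory: these names and versions are illustrative only.
inventory = {
    "ddc01 (Delivery Controller)": "1912",
    "ddc02 (Delivery Controller)": "7.15",
    "dir01 (Director)": "1906",
}

# Components that would need an upgrade before onboarding.
needs_upgrade = [name for name, ver in inventory.items() if not meets_minimum(ver)]
print(needs_upgrade)
```

Comparing the YYMM strings as integers works here because later releases always produce a larger number. The check says nothing about outbound internet access, which you would still need to confirm separately.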

Visuals

So how does it look once there is data present? Upon entering the Analytics management view and choosing Performance, you are presented with two main tabs, Users and Infrastructure.

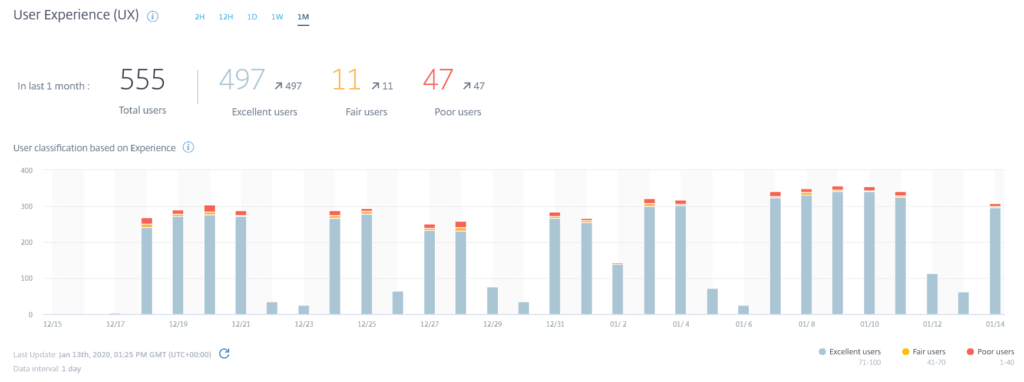

The Users tab is presented first, and this is ideal because it concentrates on the UX, which is often of primary interest. It currently measures four main areas – the overall User Experience index, User Session information (totals and total failures), Session Responsiveness (representative of ICA round trip time), and Logon Duration. It defaults to showing the details from the last two hours – I prefer to extend this to the last month, so it would be nice to be able to change the default view.

It’s also really good that this provides an aggregated view of the health of the user experience at a glance. Often you have to spend a lot of time setting up views and baselines within monitoring tools before you can start seeing the data, but here, you already have the data organized along some best practice guidelines. It would obviously be nice to be able to customize the index that generates the UX score and the views so we can present an operational dashboard that’s tailored to our environment, but this is the first step – an overall view into the health of the user experience. As we had been running for so long without any monitoring of this sort, I had spoken to a lot of users and was aware that most of them were pretty satisfied with the performance, so the percentage of users with Excellent performance (89%, as opposed to 8% with Poor performance) was pretty much in line with what I was expecting.

I’m not sure how often Analytics updates its views – but it must be quite regularly, as you can break the data down as far as 15-minute intervals. It would be useful to have some insight into the refresh intervals, and maybe to be able to customize them.

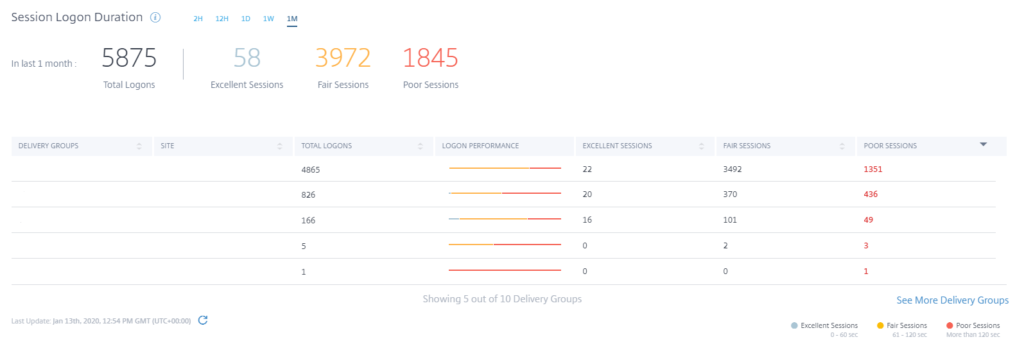

Logon duration also provided some interesting and useful data. My users had always told me that the logon experience was really good, but my logons seemed a bit sluggish to me. It turns out I was right – the reason the users thought it was good was because they were being migrated from a platform where the logon experience was terrible, and this new cloud solution seemed much more responsive, whereas I expected my logon to be a lot snappier. The statistics from Performance Analytics bore me out – the majority of the logons, on a Citrix best-practice level, were simply Fair, rather than Excellent, so this provided vindication for me and allowed me to convince them that I should spend some time working on logon optimizations.

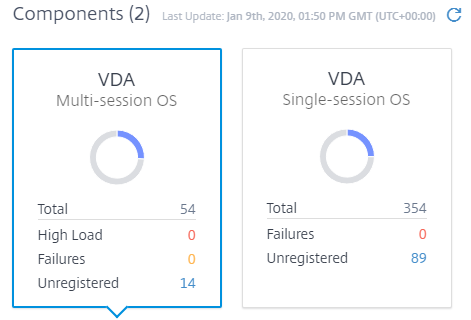

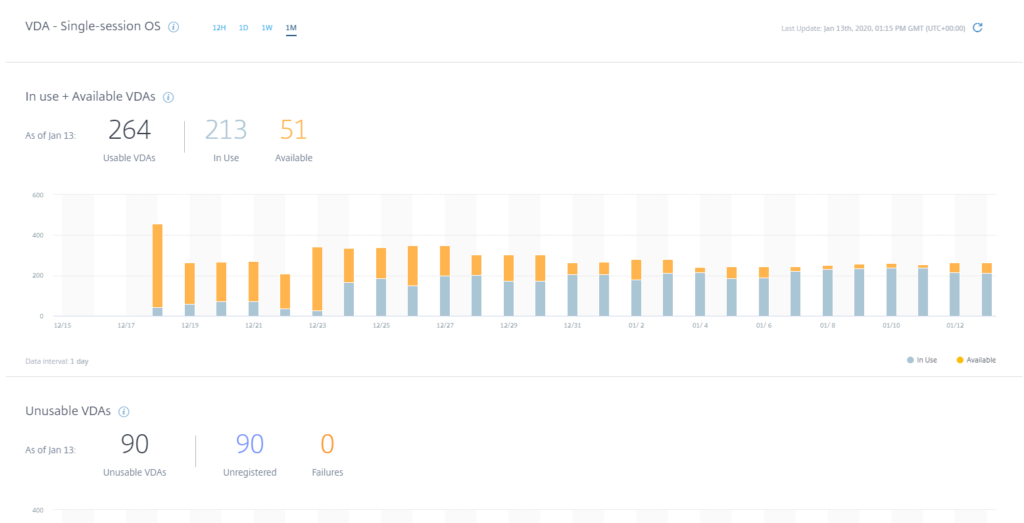
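To make the Excellent/Fair/Poor breakdown a little more concrete, here is a minimal sketch of how a set of logon durations could be bucketed and turned into percentages. I don’t know the actual cut-offs the service uses, so the two thresholds below are purely hypothetical, as are the sample durations and the function names.

```python
from collections import Counter

# Hypothetical thresholds in seconds; the service's real cut-offs are not
# stated in this post and may well differ.
EXCELLENT_MAX_S = 30.0
FAIR_MAX_S = 60.0

LABELS = ("Excellent", "Fair", "Poor")


def rate_logon(seconds: float) -> str:
    """Place a single logon duration into one of three rating bands."""
    if seconds <= EXCELLENT_MAX_S:
        return "Excellent"
    if seconds <= FAIR_MAX_S:
        return "Fair"
    return "Poor"


def rating_share(durations: list[float]) -> dict[str, float]:
    """Return the percentage of logons in each band, rounded to 0.1."""
    if not durations:
        return {label: 0.0 for label in LABELS}
    counts = Counter(rate_logon(d) for d in durations)
    total = len(durations)
    return {label: round(100 * counts[label] / total, 1) for label in LABELS}


# Made-up logon durations (seconds) for illustration.
sample = [22.0, 41.5, 38.0, 55.2, 47.9, 71.3, 44.0, 28.4, 52.6, 49.1]
print(rating_share(sample))
# {'Excellent': 20.0, 'Fair': 70.0, 'Poor': 10.0}
```

With these invented numbers most logons land in Fair, which is the same shape of result I saw in the console: nothing is broken, but there is clear room for optimization.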

The Infrastructure tab offers a basic overview of the health of the Citrix infrastructure, divided into two main tabs for Multi-Session OS and Single-Session OS. I’d like to see a lot more in here such as details on Cloud Connector health, component versions, etc., but for now it gives you a quick insight into the health of your VDAs.

You can see what the load is on your VDAs, how many are in use and available, how many are unusable, how many are unregistered, how many are in maintenance mode, and a breakdown by Delivery Group. Again, this is just a really useful snapshot that you can use to gauge the overall health.

Impressions

So what do I think of Performance Analytics?

I have found this really useful in my current role. I’m not involved in the day-to-day administration of the Citrix Cloud infrastructure, but I am regularly asked for certain details on it, such as how many people are using the system or whether it is meeting expectations. A dashboard like this is excellent for that. If you are an administrator looking to dive deep into the weeds and identify specific bottlenecks on a very granular level, then obviously, Director is where you need to be.

However, that’s not to say Analytics in this guise has no use for troubleshooting. We have many problems reported in the environment, and often we have to investigate every single part of the infrastructure to troubleshoot them. Performance Analytics, though, allows us to concentrate on the right areas more quickly. For instance, Analytics reports that most of the failures we suffer are due to client connection issues, so we are concentrating less on the Citrix and cloud back-end systems and looking much more closely at client considerations such as the Receiver/Workspace App version, or the kind of device being used to connect.

Having said that, it is fairly limited at the moment. I’d love to see many more views and metrics added to this and I’d love to be able to drill into the displayed reports to get more detail. I think it is a good measure of potential to see how often you click on parts of the interface trying to see if you can dig deeper into the data, and in the Analytics console, yes, I do a lot of clicking.

I would love to see Performance Analytics develop and grow so that it offers a one-stop-shop for an at-a-glance measure of the health of the Citrix Cloud solution. We often sell Citrix Cloud into the business as an off-the-shelf method of accessing desktops from anywhere, and to provide access to a “status page” without any setup or baselining is a pretty tempting proposition. The fact that we can simply activate it and watch the data come in is also really refreshing for a person who is used to going through lengthy design and approval processes simply to stand up a PoC!

In summary – Performance Analytics has made a nice start, and shows a lot of potential. Here’s hoping it gets the attention it needs to grow and shine. It’s fully available now – see https://www.citrix.com/blogs/2020/01/13/get-a-deeper-view-into-ux-with-citrix-analytics-for-performance/ for further details.


Thanks for sharing this detailed review. I’d like to know how Citrix Analytics for Performance stands against other Citrix monitoring tools like eG Enterprise, Dynatrace, etc. Have you done any comparative analysis of this against any other existing Citrix APMs?