Put bluntly, application delivery is all that an IT function does. Many other concerns wrap around it – security, usability, management, resilience; the list goes on – but at the core, the myriad pieces of the information technology stack exist to serve applications to their users. Even the operating system is just one more in a long line of commodities that join up to form the service we provide: a service which, at its most fundamental, puts applications and their associated data in a place where users can access them and perform their required business functions.

Shortened version – applications are everything.

Over the many years we have been delivering applications, whole ecosystems have sprung up to both facilitate and manage them. Over time, these ecosystems have grown in diversity and complexity. This becomes even more noticeable as companies expand by acquisition or merger and have to fold different delivery methods into existing ones. Bespoke solutions often spring up to fill specific gaps and become deeply embedded in particular parts of the delivery strategy. Business requirements and security mandates also evolve, so the application delivery ecosystem undergoes significant change to meet these challenges and becomes not just bloated and complicated, but extremely unwieldy.

This is not helped by the fact that the users of today are radically different in their behaviour and their “consumption” of applications. The “user journey” has changed significantly from the time when enterprises first started to put together their deployment frameworks. Users no longer exclusively fall into “monolithic” categories with specific sets of enterprise apps – users can have specific required applications, optional applications, even applications and functions driven by software they have chosen or even developed themselves. Each user persona within the enterprise is both nuanced and possibly quite fluid, and additionally each may run applications from a multitude of different devices – PCs, mobile devices, tablets, virtual machines, cloud services, hosting platforms, connectors, etc.

Typically, larger enterprises began their forays into application delivery frameworks with significant investments in technology such as Microsoft’s Configuration Manager and Active Directory, and these systems became the starting point for their application delivery strategy. These technologies are very much products of the age they were born in – a time when users had one physical device on one allotted desk and were provided with an immutable, non-negotiable stack of business applications. Over time, other vendor products and custom solutions were often embedded into this base to enable new functionality. Provisioning applications to users from these delivery tools is typically device-centric and time-consuming, so changes to users’ application entitlements can be slow, onerous and intrusive. An over-reliance on a drawn-out “packaging process” is also frequently observed (sometimes with multiple technology layers in use), resulting in a long delay between the request for a new application and the user actually being able to launch and use the software.

The rise of Software as a Service (SaaS), cloud services, and modern automated DevOps approaches means that legacy application delivery methods such as those described above are struggling to keep up with a modern user base that behaves in the “fluid” fashion described earlier. Enterprises often do not fully understand how their users work – what they are doing, what they are connecting to, what access each application or service requires. Administrators lack detailed knowledge of the applications they must provide and of how the users they support now work, and they are armed only with a toolset designed for enterprise environments that looked markedly different from those of today; it is small wonder that they struggle to provide any kind of dynamic service to their user bases.

The “unpicking” of this bloated, intertwined and cumbersome application delivery framework can be a daunting task, often with no immediately obvious business benefit. However, if it can be achieved – even within a reduced scope of particular applications – there are many tangible advantages that can be realized. A few of these are summarized below:-

- Single scalable delivery framework that can be extended as required

- Single packaging process – package once, run anywhere

- Single, simple update process for all applications across the estate

- Software aligns across physical/virtual, or any operating system type

- Deployment of applications in the context of the user, not the device

- Dynamic assignment and unassignment of software packages

- Applications delivered without advertisement, interference, restarts or dependencies

- Applications “self-served” from a single portal or “app store”

- Applications delivered ready for user consumption

- Applications remain available regardless of user device or access point

- Applications delivered to servers as well as client devices

To facilitate these benefits, a pivot is required away from existing “legacy” delivery frameworks towards something more aligned to the agile modern world. Technologies have grown to address the needs of the modern user base, and concepts such as software repositories, package managers and infrastructure management tools should be areas of interest when overhauling an application delivery framework.

The concept of a “software repository” is the first building block in this new approach. The “repo” should provide all required software, be easy to update, support permissions and version control, and offer a “portal” through which users can self-serve applications via a GUI. It should also provide full scripting support so that automated build processes can locate and install the required applications, whether by name or by function. The flexibility of this repository is key: it should be able to serve all use cases, from automated server recovery scripts, to developers installing their favourite tools, to a new user having their device provisioned ahead of shipping.

Repositories of this type can be organizational, community, shared, cloud-based or internal – or even a mix of all the above. Technologies that can provide such a repository include Artifactory, Nexus, Cloudsmith and ProGet, among others.
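To make the repository requirements concrete, here is a minimal in-memory sketch of the behaviour described above: versioned packages, group-based permissions, and lookup by name or by function. It is only an illustration. The `Package` and `Repository` classes, their fields, and the group names are hypothetical and do not correspond to the API of Artifactory, Nexus, Cloudsmith or ProGet.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Package:
    """One published version of an application (hypothetical model)."""
    name: str
    version: tuple[int, ...]          # e.g. (121, 0)
    functions: frozenset[str]         # capabilities such as "browser"
    allowed_groups: frozenset[str]    # groups permitted to install it


@dataclass
class Repository:
    packages: list[Package] = field(default_factory=list)

    def publish(self, package: Package) -> None:
        """Add a new package version to the repository."""
        self.packages.append(package)

    def _visible(self, groups: set[str]) -> list[Package]:
        # Permissions: a package is visible if the caller shares a group with it.
        return [p for p in self.packages if p.allowed_groups & groups]

    def latest(self, name: str, groups: set[str]) -> Package | None:
        """Look up a package by name and return its highest visible version."""
        matches = [p for p in self._visible(groups) if p.name == name]
        return max(matches, key=lambda p: p.version, default=None)

    def by_function(self, function: str, groups: set[str]) -> list[str]:
        """Return the names of visible packages that provide a function."""
        return sorted(
            {p.name for p in self._visible(groups) if function in p.functions}
        )
```

The same two lookups could sit behind both a self-service portal and an automated build script, which is the flexibility the repository is meant to provide.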

The repository then supports whichever “package managers” are required, each of which delivers applications to a specific target type. For instance, Windows package managers include Chocolatey and WinGet; for Linux you could use tools such as Yum, Apt or RPM; and on macOS you have options like Homebrew or Nix. The beauty of such package managers is that they manage all software, not just software that runs through conventional installers. On Windows, for example, you can have more than twenty different installer technologies, without even counting other methods of deployment like runtime binaries, scripts or zip files that can also place software onto a target. Wrapping these up in a package manager gives you full delivery, auditing and version control of each type – even applications that don’t traditionally “install”.
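As a rough illustration of how one build process might target several package managers, the sketch below maps a manager name to the command line that installs a package. The commands use the standard install verbs of each tool; the choice of default manager per operating system, and the `install_command` helper itself, are assumptions made for the example.

```python
from __future__ import annotations

import platform

# Install command templates for some of the package managers named above.
INSTALL_COMMANDS = {
    "chocolatey": ["choco", "install", "{package}", "-y"],
    "winget": ["winget", "install", "--id", "{package}", "--exact"],
    "apt": ["apt-get", "install", "-y", "{package}"],
    "yum": ["yum", "install", "-y", "{package}"],
    "brew": ["brew", "install", "{package}"],
}

# Assumed default per operating system; a real estate may choose differently.
DEFAULT_MANAGER = {"Windows": "chocolatey", "Linux": "apt", "Darwin": "brew"}


def install_command(package: str, manager: str | None = None) -> list[str]:
    """Build the argument list that would install a package on a target."""
    if manager is None:
        # platform.system() returns "Windows", "Linux" or "Darwin".
        manager = DEFAULT_MANAGER[platform.system()]
    template = INSTALL_COMMANDS[manager]
    return [part.format(package=package) for part in template]
```

The returned list can be handed to `subprocess.run` on the target, or simply written into a build script, so the calling code does not need to know which installer technology sits behind the package.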

Continuing with the example of Windows package managers such as Chocolatey, once installers are placed within this framework they use the nupkg (NuGet) format, which ensures that each application is completely self-contained. Converting existing installers to nupkg files is straightforward – Chocolatey calls a conversion module that takes a few minutes to run. For target devices to support the package installations, a single line of PowerShell needs to be run as part of the build process.

The package managers can then either be used directly to deploy onto clients and servers, or you can use dedicated infrastructure management tools and/or CI/CD pipelines to create a fully automated path to production delivery. Components within these pipelines and infrastructure management tools include technology like Chef, Puppet, Ansible, Terraform, Jenkins, Git, Configuration Manager, Intune, Altiris and Chocolatey Central Management – there are many ways that this could be handled, and it should align with practices already adopted within the enterprise. Continuing with the example of Chocolatey as a Windows-based package manager, I personally prefer to use Microsoft Intune as the deployment tool for clients and Ansible for servers – but as stated previously, this can be delivered in countless different ways and configurations. This is another benefit of using these kinds of technologies for application delivery – the framework can be customized to the exact requirements of, and tools within, an enterprise estate, rather than being limited to inflexible “best practices” recommended by a single vendor with total control of the solution. Rather than forcing the business to fit a rigid application deployment framework, we build a flexible framework around our specific requirements, one which can grow and expand as we need it to.

Once formulated, the framework and the tools within it can be joined to robust build processes that embrace the concepts of infrastructure as code. All applications should pass through a triaging process that identifies the best delivery mechanism for them and then packages them into a future-proofed target format, allowing us to deliver them as single lines of code within build or post-build scripting. This code-driven model brings the nirvana of a stateless “evergreen” estate within reach, as both clients and servers can be rebuilt and reloaded without any interruption to service. The benefits of an end-to-end deployment framework that covers the rapid provisioning of devices and all of the user’s required applications are many – they include, but are not limited to, the below:-

- Less requirement for patching

- Removal of human error from build processes

- Rapid redeployment improves security stance (e.g. for removing APTs or recovery from ransomware attacks)

- Quicker update process

- Single image for each operating system type

- Environments can be scaled rapidly up or down

- Users can use any device
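The infrastructure-as-code idea behind these benefits can be sketched as a comparison between a declared desired state and what is actually installed on a device. The example below is an illustrative assumption, not a feature of any particular tool: the `plan` function, the action names, and the package versions are all hypothetical.

```python
def plan(desired: dict[str, str], installed: dict[str, str]) -> list[tuple[str, str]]:
    """Compare desired and installed package versions and list the actions needed."""
    actions = []
    for name, version in sorted(desired.items()):
        if name not in installed:
            # Missing entirely: install the declared version.
            actions.append(("install", f"{name}=={version}"))
        elif installed[name] != version:
            # Present but at a different version: move to the declared one.
            actions.append(("update", f"{name}=={version}"))
    # Anything installed but no longer declared is unassigned.
    for name in sorted(installed.keys() - desired.keys()):
        actions.append(("uninstall", name))
    return actions


# Hypothetical desired state for a user, and the current state of one device.
desired = {"git": "2.44.0", "vscode": "1.88.0"}
installed = {"git": "2.43.0", "putty": "0.80"}

for action, target in plan(desired, installed):
    print(action, target)
```

A freshly rebuilt device starts with nothing installed, so its plan is simply the full list of installs. Applying the same declaration to any device, new or existing, is what makes rapid redeployment and a single base image per operating system practical.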

Adopting this approach has another huge benefit: the code and infrastructure management components can readily be lifted and shifted between hosting areas. This aligns again with future-proofing your framework – who knows where you will be delivering devices and applications from ten years from now? By putting together a solution that supports industry standards for automated provisioning, we can ensure that we can plug into any underlying platform and achieve the same results.

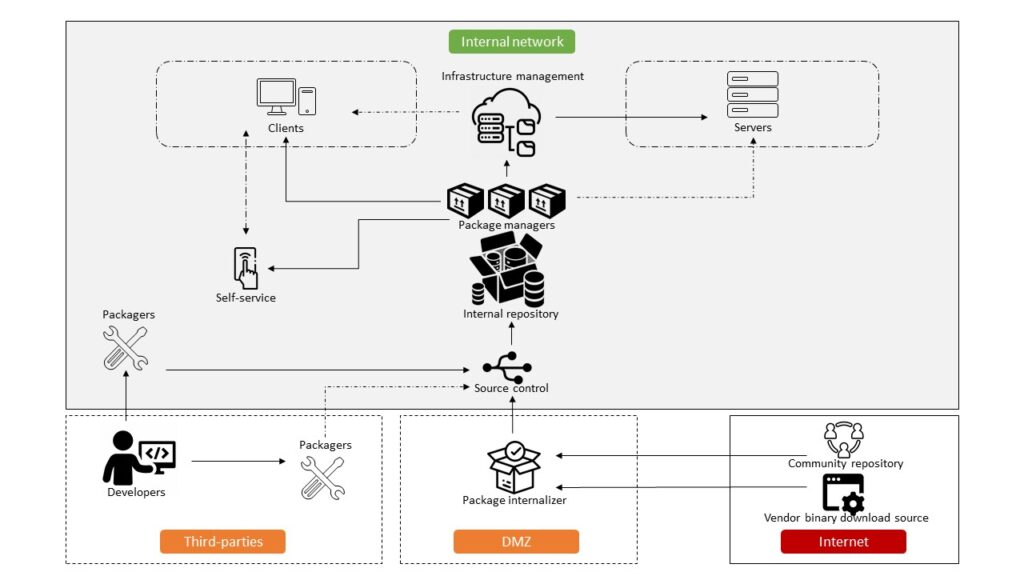

What might such an architecture look like? Given that there are so many possibilities by mixing and matching the available tools and formats, it’s difficult to pin something down at such a high level – but here’s an example showing a conceptual take on how you could deploy applications within a hypothetical enterprise estate.

In summary, for an enterprise to become truly agile, to deliver applications to the user base in a way that enables them rather than handicaps them, we need to take a long hard look at adapting to the modern world and embracing the technologies that are out there.
