Everything you need to know about Windows logons in one blog series starts right here!

I have threatened on several occasions now to do a follow-up to my previous article on Windows logon times which incorporates the findings from my “logon times masterclass” that I have presented at a few events. The time has come for me to turn these threats into reality, so this series of articles and accompanying videos will explore every trick we know on how to improve your Windows logon times. As many of you know, I work predominantly in Remote Desktop Session Host (RDSH) environments such as Citrix Virtual Apps and Desktops, VMware Horizon, Windows Virtual Desktop, Amazon Workspaces, Parallels RAS, and the like, so a lot of the optimizations discussed here will be aligned to those sorts of end-user computing areas…but even if you are managing a purely physical Windows estate, there should be plenty of material here for you to use. The aim of this is to provide a proper statistical breakdown of what differences optimizations can make to your key performance indicators such as logon time.

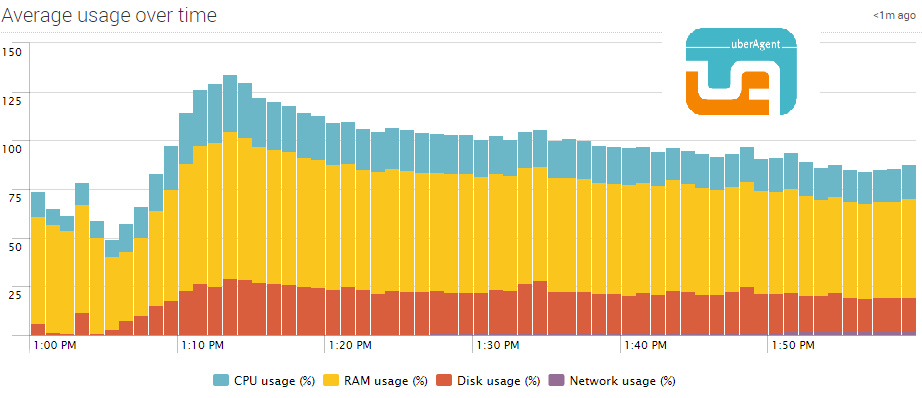

This series of articles is being sponsored by uberAgent, because the most important point to make about logon times is that if you can’t measure them effectively, then you will never be able to improve them! uberAgent is my current tool of choice for measuring not just logons (which it breaks down into handy sections that we are going to use widely during this series) but every other aspect of the user’s experience. All of the measurements in this series are going to be done via uberAgent, and as it comes with free, fully-featured community and consultants’ editions, there’s absolutely no reason that you can’t download it and start using it straight away to assess your own performance metrics. I’ve written plenty about uberAgent on this blog before, and I stand by it as the best monitoring tool out there for creating customized, granular, bespoke consoles that can be used right across the business. I’ve recently deployed it into my largest current client, so you can be sure I am putting my money where my mouth is – if it didn’t do the job, I wouldn’t have used it for my customers, simple as. Go and try uberAgent right now – you won’t regret it!

Part #1 – introduction

Logons and Time To Operational Effectiveness

It is important to start by defining what we mean by logon times, and to quantify it. The “logon time” KPI deals with the time it takes from when a user sits down in front of a device and submits their credentials for authentication, to when they can effectively start to use the system. A lot of things happen in this time period – they are authenticated, their profile is loaded, their session is initialized and set up, policies are applied, etc. But from the user perspective, it’s just “dead time” and as such we need to minimize it.

With that in mind, we need to approach the “logon” KPI from a user perspective. Administrators often use some smoke-and-mirrors to improve logon times – for instance, they might “offload” aspects of the logon process until later on, or they might connect to an already-active session to give the impression of a speedy logon. We are going to take into account tricks like this when doing our testing – from both perspectives. We will use uberAgent to measure all of the logon time metrics (which indicate a logon has finished when Windows records it as successful), but we will also do some “human” testing in parallel. This is to address instances where the Windows logon may actually have finished (such as with the offloading trick) but the system is still not usable (maybe the offloaded task is hammering the CPU and/or memory); in those cases we will note this against the logon time measurement as an addendum. On the flip side, though, if there are tricks we can use to improve the logon time from the user perspective (such as connecting to a pre-prepared session), we will also note these as positive addendums to the logon metrics. Essentially, we are trying to assess the “time to operational effectiveness” (TTOE) for the user rather than being strict about dealing with only the logon time measurement.

We will record videos of each of the stages of testing that are done, host them on YouTube and directly link them from the relevant articles. That should help you assess first-hand how effective everything is, not only purely statistically, but also from that “TTOE” perspective that we mentioned.

How important is the TTOE?

This is an interesting point. I have to say, we can often get ourselves into an arms race when it comes to logons. It can become almost obsessive to try and shave more time off, to improve the experience for the users. But how much good are we really doing by taking it to the extreme – and what if (hat tip to Joe Shonk here) we are actually doing the opposite?

There are some industry verticals where logon time, or TTOE, is absolutely vital. Healthcare goes without saying – seconds can mean life or death. Finance, particularly investment banking, is another vertical where they care deeply about these metrics – speed of response can translate into thousands, maybe even tens or hundreds of thousands, of dollars. For particular sectors, it can definitely be argued that shaving every second off the logon time KPI is a viable pursuit with a net business benefit.

For other sectors though, we have to adopt a more nuanced approach. Generally, users will allow a certain period of time before they think a logon is “taking too long” and their attention wanders. This can, perversely, be proportional to what they are used to. Users who currently have two-minute logons may well find that a fifty-second logon feels much faster. But a difference of one or two seconds to an already-healthy logon time will probably not even be noticed.

As a rule of thumb, many authorities work on the premise that a TTOE of less than thirty seconds is an acceptable logon. I try to take this a step further, and have my own little mantra when dealing with this:

“Aim for ten seconds, accept twenty seconds, never go beyond thirty seconds”

Me, last year sometime

This serves me pretty well. If you get twenty seconds or under for your TTOE, then I’d not expend any further energy on it unless you work in an industry vertical where every second translates into real customer or business benefit. Of course, you still need to constantly monitor the logon time KPI – if it suddenly trends upwards, you need to identify the cause of the uptick, and address it. Which of course is another reason you should go and download uberAgent right now!

Productivity gains

Another point that is worth bringing attention to is the oft-quoted anecdote about cost savings through improving logon time KPIs. We’ve all heard variants of it along these lines – 10,000 users who have two-minute logon times see those times reduced to thirty seconds, which means every day your users are now doing 250 hours more work between them, saving you whatever huge number 250 extra man-hours per day adds up to over a year. It all sounds very impressive. But is it accurate?

Unfortunately not. User productivity doesn’t depend simply on when they get logged in and they sure as hell don’t stop being productive at the exact moment their shift finishes. There are just too many factors in play here to make such claims and actually expect them to be borne out in reality. I’ve never seen any documented proof that these sorts of cost savings are possible – even in call centres, which are arguably one of the most aggressively micro-managed environments I’ve ever seen.
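For what it’s worth, the arithmetic in the anecdote does check out; it’s the assumption behind it (that every second saved at logon turns into a second of extra work) that doesn’t hold. A quick sketch of where the 250 hours comes from is below. The function name is my own, and it assumes exactly one logon per user per day, which the anecdote implies but never states.

```python
def claimed_hours_saved_per_day(users: int, old_logon_s: float, new_logon_s: float) -> float:
    """Hours per day the anecdote claims are 'recovered'.

    Assumption (not from any real measurement): one logon per user per day,
    and every saved second is converted directly into productive work.
    """
    saved_seconds = users * (old_logon_s - new_logon_s)
    return saved_seconds / 3600  # seconds -> hours


# The figures from the anecdote: 10,000 users, two minutes down to thirty seconds
print(claimed_hours_saved_per_day(10_000, 120, 30))  # 250.0
```

The number is real enough; what it measures is time not spent waiting, not time spent working.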

However, what an improved logon time does give is improvement in user satisfaction. They don’t start their days being frustrated, and in general, a user’s acceptance of a solution is highly driven by logon time, because that’s invariably their first interaction with a system. Happier users are generally more productive, less likely to take time off, less likely to get involved in disciplinaries, etc. For these kinds of statements, you can find data that backs them up. Improving the logon time should be done as part of a general focus on user experience to try and improve the working processes and satisfaction of the user base, and the reason logon time warrants a special focus is that it is usually the first potential bottleneck a user will encounter.

“Adjust your approach to the logon times KPI based around the needs of the business”

Me, today and every day

So ideally, you need to tailor your approach to logon times based around your business needs. If you do work in an industry sector where every second can make real differences to your service, business or customers, then it is perfectly acceptable to aim for the minimum possible TTOE (which is probably about five seconds, in an absolutely perfect environment). But if you do aim this high – just remember the old adage about the road to hell being paved with good intentions. You need to make sure that every optimization you apply doesn’t have knock-on effects that break other aspects of your environment (yet another good reason to have a monitoring system like uberAgent in place – just saying :-)).

But if you aren’t in a sector where every second counts, as I said, aim to get close to ten, but accept anywhere under twenty. If it creeps up past twenty towards thirty, then find out the reason for the uptick (uberAgent will help), and put remediations in place. Don’t obsess about your logon times when you’re under twenty seconds – the law of diminishing returns means you will not likely make any difference that your users will notice, and as I mentioned earlier when we talked about improving user satisfaction, that’s the key part. If you drop from one minute down to twenty seconds users will definitely notice and be happy about it, but if you then drop from twenty to fifteen, they’d likely not even think anything had changed.
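If you want to bake the “ten, twenty, thirty” mantra into a report or an alert, a minimal sketch might look like the one below. The function name and the labels are mine, and the exact boundary handling (for example, treating exactly twenty seconds as acceptable) is my own reading of the rule of thumb rather than anything uberAgent provides.

```python
def rate_ttoe(seconds: float) -> str:
    """Classify a TTOE value against the 'aim for ten, accept twenty,
    never go beyond thirty' rule of thumb.

    The thresholds come from the mantra above; the labels are illustrative.
    """
    if seconds < 0:
        raise ValueError("TTOE cannot be negative")
    if seconds <= 10:
        return "on target"
    if seconds <= 20:
        return "acceptable"
    if seconds <= 30:
        return "investigate"   # creeping up past twenty: find the cause
    return "unacceptable"      # beyond thirty: remediation needed


for ttoe in (8.5, 17.0, 26.3, 59.14):
    print(f"{ttoe:>6.2f}s -> {rate_ttoe(ttoe)}")
```

If you work in one of the verticals where every second counts, you would obviously tighten these thresholds to suit.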

Baselining

Now what is very important is for us to establish some baselines. We can’t hit an absolute “worst case scenario” baseline right now because we are going to run through lots of different scenarios and we may have to place artificial load onto the systems for each different scenario we look at, but we do need to see what we consider a typical “unoptimized” metric. So for instance, these initial baseline times don’t include anything like Citrix or RDSH being installed, security software being installed, etc. – we will add and test those at an appropriate time.

The operating systems we will use are Windows 10 Enterprise version 2004 (fully patched at time of testing) and Server 2019 Standard RDSH (also fully patched at time of testing). We are also going to always measure a “first” logon (so that means without a pre-existing user profile on the machine) because that’s what we generally see in non-persistent RDSH environments. As for installed applications and policies and all the other factors, we will change these based around the specific testing we are doing to try and demonstrate the differences, but vanilla versions of Windows 10 2004 and Server 2019 RDSH are going to be our “bottom-line” systems. Each system will be measured ten times to establish an average logon time metric, and we will also annotate that average with anything we observed in the TTOE “naked-eye” testing.
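As a rough illustration of how a batch of ten runs can be boiled down, here is a small sketch. The sample values are hypothetical and are not the measurements from this series, and the function name is my own; in practice the numbers would come out of whatever your monitoring tool exports.

```python
from statistics import mean, median


def summarize_runs(times_s: list[float]) -> dict[str, float]:
    """Summarize a batch of logon times (in seconds).

    Reporting the median, minimum and maximum next to the mean makes it
    easier to spot a single run that is dragging the average around.
    """
    if not times_s:
        raise ValueError("need at least one measurement")
    return {
        "runs": len(times_s),
        "mean": round(mean(times_s), 2),
        "median": round(median(times_s), 2),
        "min": min(times_s),
        "max": max(times_s),
    }


# Hypothetical sample data for illustration only (NOT the real baseline runs)
sample_runs = [61.2, 58.4, 57.9, 60.1, 59.3, 58.8, 57.5, 59.9, 58.2, 72.6]
print(summarize_runs(sample_runs))
```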

At the end of this series, hopefully we will be able to show what we can achieve as the maximum possible optimization. In addition, we may be able to show that some optimizations might not be as effective as we possibly thought they were!

With all this said, the current uberAgent baselines for the two systems we will be doing our majority of the testing on look like so:-

Windows 10 2004 averages a logon time of just under one minute (59.14 seconds, to be precise). This is *significantly* better than previous Windows 10 iterations and just goes to show how much removing the huge number of UWP apps has improved the logon experience. No observations were made around the TTOE (which was to be expected at this stage, as we haven’t tried anything clever yet!)

It is worth mentioning here how easy it is to extract this data from uberAgent – simply pop in a filter for a date/time range and/or user, and away you go. At one point I even received data from an incomplete logon that was still in progress as well 🙂

Next we did the same tests on a Server 2019 instance. uberAgent saved my bacon again here, because I inadvertently logged on to the Windows 10 instance – so I just quickly tweaked my filter to concentrate on the RDSH hosts only. Server 2019 does (as we’d expect) better than Windows 10, with an average of 39.64 seconds. Again, as fully expected, there was no significant difference between the logon time metric and the TTOE.

Now I know this is simply our “vanilla” baseline (which will obviously go up as well as down), but there is one interesting observation you can make straight away from the uberAgent statistics here. Note the very first logon for each batch of testing – these statistics are provided chronologically from bottom to top, so look at the bottom of the list. Both of these statistics (73.48 seconds for Windows 10, and 45.05 seconds for Server 2019) are higher than the average and quite noticeably out of step with the rest of the data. So Server 2019’s first logon is 13.6% slower than its ten-run average, and Windows 10’s first logon is 24.2% slower than its ten-run average. We will delve more into this later in the series, but this shows us the inherent value of studying the statistics closely rather than simply relying on the average – and that using uberAgent has already given us some actionable intelligence even after the first set of baselining.
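The comparison between the first logon and the average is simple to reproduce. The sketch below uses the four figures quoted above and measures each first logon against the ten-run average (which includes the first logon itself); measured against the mean of the remaining nine runs, the gap would come out somewhat larger. The function name is my own.

```python
def percent_slower(value_s: float, average_s: float) -> float:
    """How much slower value_s is than average_s, as a percentage of the average."""
    if average_s <= 0:
        raise ValueError("average must be positive")
    return (value_s - average_s) / average_s * 100


# (first logon, ten-run average) in seconds, as quoted in the baselines above
baselines = {
    "Windows 10 2004": (73.48, 59.14),
    "Server 2019 RDSH": (45.05, 39.64),
}

for name, (first_logon, average) in baselines.items():
    gap = percent_slower(first_logon, average)
    print(f"{name}: first logon is {gap:.1f}% slower than the average")
```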

Summary

That’s it for the introduction – for the next part of this series we are going to get into loading up our infrastructure to try and simulate problems, and see how much we can shave off our logon times by addressing them. Stay tuned!


Hi James – Great start to this series! When can we expect part 2? 😀

Was due last week, but due to having to get kids ready to go to senior school tomorrow, have been slightly delayed. Imminent is the word, I believe 🙂

Great stuff James…can’t wait to catch the rest of the series. This is an interesting subject for me as I can get caught up, OCD :-), in trying to shave off every sec I can. But I have also run into issues like what Joe Shonk talks about, where I break something by over-optimizing. Trying to find that middle ground.

Hi James,

Thanks for all these articles. Do you have specific recommendations for installing uberAgent in a layering SBC 2016 environment? OS layer? Apps layer?

Regards

Hi, I haven’t installed uberAgent into an App Layering environment, but I’d assume it would either go in the OS or platform layer. I have asked the uberAgent support team for clarification and will post when they get back to me.

Platform layer is the recommended way